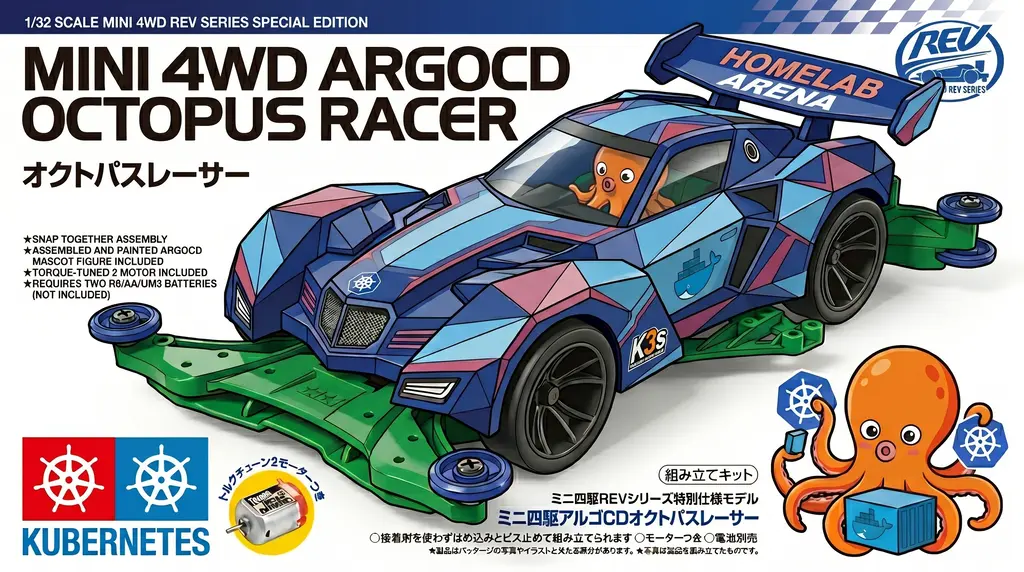

Homelab Arena Part 5: Terraform, Ansible, and k3s on Proxmox

Part 3 rebuilt the network.

Part 4 gave me a clean Ubuntu cloud template I could trust.

Part 5 is where those two pieces finally stop feeling like preparation and start feeling like a platform.

This is the moment the template turns into real machines. Terraform clones the VMs. Cloud-init gives them fixed identity on the LAN. Ansible installs k3s in the only order that makes sense: control plane first, then workers. And the whole thing ends with the one command that tells you whether the plan actually worked:

    kubectl get nodes

That was the outcome I was chasing the whole time.

The lesson I carried forward

One of the more awkward parts of my earlier OCI k3s rebuild was token handling.

The workers needed a join token that only existed after the control plane was already alive. So the automation had to dance around that fact: wait for the server, fetch the token, pass it along, then let the workers join. It worked, but it always felt like I was working around the system instead of designing it cleanly.

I did not want to repeat that here.

So in this version, Terraform creates the cluster token up front. The server uses it. The workers use it. Nobody has to log into the master to retrieve anything later.

That change sounds small, but it removes a surprising amount of friction.

    resource "random_password" "k3s_cluster_token" {
      length  = 48
      special = false
    }

Once I had that, the rest of the flow became much easier to reason about.

How the pieces fit together

At a high level, this part is pretty simple:

    cloud template --> terraform apply --> VMs (master + workers)
                              |
                              v
    network_dns --> cloud-init --> guest resolvers
                              |
                              v
    ansible site.yml --> k3s (servers, then agents)

That simplicity is part of the payoff.

The Ubuntu template from Part 4 gives me a clean starting point. Terraform is responsible for creating the machines. Cloud-init handles first-boot identity and networking. Ansible takes over once the VMs exist and installs the cluster in sequence.

Each layer has a job. None of them have to do too much.

Using the network prepared by the Network Server from Part 3

Part 3 matters a lot more here than it might seem at first.

That network rebuild was not just cleanup. It was the point where the homelab started behaving like one system again. pfSense became the router, Pi-hole handled LAN DNS, and the Proxmox host finally lived inside a layout that made sense.

So when I started building the k3s nodes, I did not want them to come up in some disconnected bubble. I wanted them to inherit that same network logic.

Terraform is not managing Pi-hole directly. It is not opening the UI or writing DNS records through an API. What it does do is tell each VM, through cloud-init, which resolvers to use.

In this repo, that starts with network_dns:

    variable "network_dns" {
      type        = list(string)
      default     = ["10.0.0.98", "1.1.1.1"]
      description = "DNS servers for cloud-init."
    }

The root module passes that value to the node module as dns_servers, and from there it goes straight into the guest initialization block:

    initialization {
      dns {
        servers = var.dns_servers
      }

      ip_config {
        ipv4 {
          address = "${var.ipv4_address}/${var.ipv4_prefix}"
          gateway = var.ipv4_gateway
        }
      }

      user_account {
        username = var.ci_user
        keys     = var.ssh_public_keys
      }
    }

That is the part I like most: the k3s VMs come up already using the same DNS direction the rest of the homelab was designed around. Pi-hole first, public resolver second. Nothing special, just consistent.
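A minimal sketch of what the receiving variable inside the node module could look like. Only the name dns_servers comes from the block above; the description text and the validation rule are assumptions added for illustration, not the repo's actual declaration.

```hcl
# Hypothetical declaration inside modules/proxmox_ubuntu_node.
# Only the variable name is taken from the post; the rest is assumed.
variable "dns_servers" {
  type        = list(string)
  description = "Resolvers handed to the guest through cloud-init, in priority order."

  validation {
    # Fail at plan time instead of booting a VM with no resolver.
    condition     = length(var.dns_servers) > 0
    error_message = "Provide at least one DNS server for cloud-init."
  }
}
```

A check like this would catch an empty list before any VM is cloned, which is cheaper than discovering it from inside a guest that cannot resolve anything.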

One Terraform module, reused properly

At the Proxmox layer, the master and workers are not really different kinds of machines.

They all come from the same Ubuntu template. They all need CPU, memory, storage, a bridge, static networking, and SSH access. The main differences are hostname, VM ID, IP address, and whether the node is tagged as control plane or worker.

That made a single reusable module the obvious choice.

The master is one instance of that module:

    module "k3s_master" {
      source = "../../modules/proxmox_ubuntu_node"

      name               = "k3s-master"
      proxmox_node_name  = var.pm_node
      vm_id              = var.master_vm_id
      template_vmid      = var.pm_template_vmid
      clone_datastore_id = var.pm_disk_storage
      network_bridge     = var.pm_bridge
      ipv4_address       = var.master_ipv4
      ipv4_prefix        = var.ipv4_prefix
      ipv4_gateway       = var.network_gateway
      dns_servers        = var.network_dns
      ssh_public_keys    = [trimspace(var.ssh_public_key)]
      tags               = ["k3s", "control-plane"]
      cpu_cores          = var.master_cpu_cores
      memory_mb          = var.master_memory_mb
    }

And the workers are just that same shape, expanded with for_each:

    module "k3s_workers" {
      source   = "../../modules/proxmox_ubuntu_node"
      for_each = var.worker_nodes

      name               = each.key
      proxmox_node_name  = var.pm_node
      vm_id              = each.value.vm_id
      template_vmid      = var.pm_template_vmid
      clone_datastore_id = var.pm_disk_storage
      network_bridge     = var.pm_bridge
      ipv4_address       = each.value.ipv4
      ipv4_prefix        = var.ipv4_prefix
      ipv4_gateway       = var.network_gateway
      dns_servers        = var.network_dns
      ssh_public_keys    = [trimspace(var.ssh_public_key)]
      tags               = ["k3s", "worker"]
      cpu_cores          = var.worker_cpu_cores
      memory_mb          = var.worker_memory_mb
    }

That is a much better place to be than copying VM blocks over and over.

If I want more workers, I do not fork infrastructure code. I update the worker map.

Predictable addresses from the start

I did not want this cluster relying on “whatever DHCP gave me today.”

That is fine for throwaway testing. It is not fine when the control plane address matters, when agents need a stable join target, and when you want DNS and inventory to line up without guesswork.

So the control plane gets a fixed IP, and the workers live in an explicit map:

    variable "master_ipv4" {
      type        = string
      default     = "10.0.1.150"
      description = "k3s server static IP."
    }

    variable "worker_nodes" {
      type = map(object({
        vm_id = number
        ipv4  = string
      }))
      description = "Worker host key => { vm_id, ipv4 }. Defaults match 10.0.1.151–155."
      default = {
        "k3s-worker-1" = { vm_id = 9151, ipv4 = "10.0.1.151" }
        "k3s-worker-2" = { vm_id = 9152, ipv4 = "10.0.1.152" }
        "k3s-worker-3" = { vm_id = 9153, ipv4 = "10.0.1.153" }
        "k3s-worker-4" = { vm_id = 9154, ipv4 = "10.0.1.154" }
        "k3s-worker-5" = { vm_id = 9155, ipv4 = "10.0.1.155" }
      }
    }

That makes the whole setup easier to reason about.

The master always lives where I expect it to. The workers are easy to count, resize, or remove. And Terraform, cloud-init, and Ansible all stay aligned around the same addresses.
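To illustrate resizing, an override along these lines would shrink the cluster to three workers. The file name terraform.tfvars and the choice of three nodes are assumptions for the example; the keys, VM IDs, and addresses reuse the defaults from the worker_nodes variable.

```hcl
# terraform.tfvars (hypothetical override, not taken from the repo)
# Setting worker_nodes replaces the whole default map, so every worker
# that should exist has to be listed. Workers 4 and 5 are left out here.
worker_nodes = {
  "k3s-worker-1" = { vm_id = 9151, ipv4 = "10.0.1.151" }
  "k3s-worker-2" = { vm_id = 9152, ipv4 = "10.0.1.152" }
  "k3s-worker-3" = { vm_id = 9153, ipv4 = "10.0.1.153" }
}
```

Because the worker module uses for_each keyed by hostname, dropping an entry only plans the destruction of that one VM and leaves the others alone. Terraform only removes the machine, though. The node object would still need to be deleted from the cluster separately.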

Terraform creates what Ansible will need later

Once the VMs are defined, Terraform is not just building infrastructure. It is also preparing the values the next layer will need.

That is the clean part of this design.

After terraform apply, I can pull the cluster token directly from output:

    terraform output -raw k3s_cluster_token

That value can go into Ansible group vars, or I can pass it in once from the command line.

Either way, the secret is already known before any install step starts.

That turns the workflow into something very straightforward:

- Terraform clones the VMs

- Terraform already knows the cluster token

- Ansible installs the k3s server

- Ansible installs the agents using the same token

No extra lookup step. No scraping a file from the master. No awkward wait loop.
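The terraform output command above implies an output named k3s_cluster_token. Its definition is not shown in this post, so the following is a sketch of how such an output is usually declared, with the description being assumed wording. Because random_password marks its result as sensitive, Terraform refuses to expose it in a root output unless sensitive = true is set.

```hcl
# Sketch of the output behind `terraform output -raw k3s_cluster_token`.
# The output name comes from the command above; the body is assumed.
output "k3s_cluster_token" {
  description = "Shared k3s join token for the server and all agents."
  value       = random_password.k3s_cluster_token.result
  sensitive   = true
}
```

With sensitive = true the value is redacted in plan and apply output but can still be read with terraform output -raw. It is also stored in plain text in the state file, so the state needs the same care as the secret itself.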

Ansible handles the order that actually matters

Once the machines are up, Ansible takes over.

This is where I wanted the automation to stay boring in the best possible way.

The playbook does not try to be clever. It just enforces the order that has to happen anyway: control plane first, then agents.

    ---
    # Order: control plane first so agents can join an API that exists.
    - name: Install k3s control plane
      hosts: k3s_servers
      gather_facts: true
      pre_tasks:
        - name: Require k3s_cluster_token (from Terraform or Vault)
          ansible.builtin.assert:
            that:
              - k3s_cluster_token is defined
              - (k3s_cluster_token | length) > 0
            fail_msg: >-
              Define k3s_cluster_token (copy ansible/inventory/group_vars/all/secrets.yml.example to secrets.yml
              and set the value from: terraform output -raw k3s_cluster_token).
      roles:
        - role: k3s_server

    - name: Install k3s agents
      hosts: k3s_agents
      gather_facts: true
      pre_tasks:
        - name: Require k3s_cluster_token and k3s_server_url
          ansible.builtin.assert:
            that:
              - k3s_cluster_token is defined
              - (k3s_cluster_token | length) > 0
              - k3s_server_url is defined
              - (k3s_server_url | length) > 0
            fail_msg: >-
              Set k3s_cluster_token (inventory/group_vars/all/secrets.yml) and k3s_server_url (inventory/group_vars/k3s_agents.yml).
      roles:
        - role: k3s_agent

That is the separation I wanted all along:

- Terraform handles infrastructure

- cloud-init handles first boot identity and networking

- Ansible handles configuration and install order

- k3s is just the software being laid down on top

When each piece stays in its lane, the whole thing becomes easier to debug and easier to extend.
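One way to keep the Ansible side aligned with the same addresses is to let Terraform render the inventory. The sketch below is an assumption, not something from the repo: the output name ansible_inventory and the k3s-master host key are hypothetical, and in the actual setup k3s_server_url lives in inventory/group_vars/k3s_agents.yml rather than in a generated file. The group names k3s_servers and k3s_agents match the playbook.

```hcl
# Hypothetical output that renders an Ansible YAML inventory from the
# same variables Terraform uses for the VMs.
output "ansible_inventory" {
  description = "Ansible inventory derived from master_ipv4 and worker_nodes."
  value = yamlencode({
    k3s_servers = {
      hosts = {
        "k3s-master" = { ansible_host = var.master_ipv4 }
      }
    }
    k3s_agents = {
      hosts = {
        # One inventory host per entry in the worker map.
        for name, node in var.worker_nodes :
        name => { ansible_host = node.ipv4 }
      }
      vars = {
        # 6443 is the default k3s API server port.
        k3s_server_url = "https://${var.master_ipv4}:6443"
      }
    }
  })
}
```

Under those assumptions, terraform output -raw ansible_inventory > inventory.yml would produce a file the playbook could be pointed at with -i, so adding a worker to the map updates both the VM set and the inventory in one place.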

The proof that it worked

At the end of all of this, there is only one result I actually care about.

Not the HCL. Not the playbook. Not the outputs.

The result is whether the cluster comes up cleanly.

So the final check is still the simplest one:

    kubectl get nodes

And when it works, it looks like this:

    NAME           STATUS   ROLES           AGE   VERSION
    k3s-master     Ready    control-plane   5m    v1.34.6+k3s1
    k3s-worker-1   Ready    <none>          5m    v1.34.6+k3s1

That is the moment where the rebuild stops feeling theoretical.

After the storage work, the network rebuild, and the template cleanup, this was the first point where it genuinely felt like I had a platform again.

Next: GitOps

With the first k3s cluster standing on Proxmox, the next step is not just adding more nodes.

The next step is changing how the cluster gets managed.

That is where Argo CD comes in.

Part 6 is where the project starts moving away from “installing things by hand” and toward declaring the cluster state in Git and letting Argo CD correct drift on its own.