GKE - Part 4: ExternalDNS, cert-manager, and Real URLs for the GitOps Platform

A heads up before getting into the details: this is a longer step.

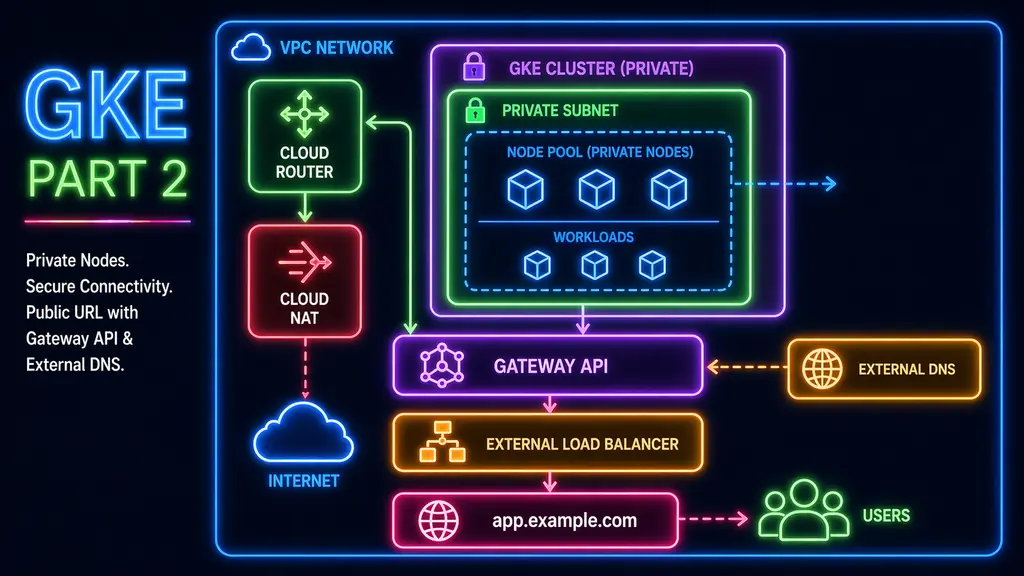

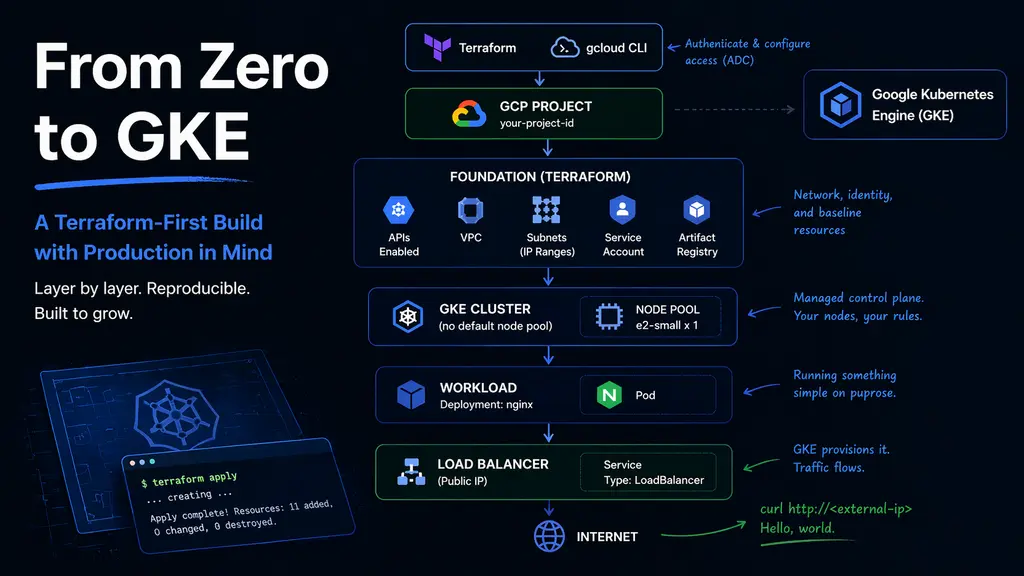

The previous parts built the cluster shape. This part starts turning that cluster into a platform. The goal is no longer just to prove that a pod can run or that a Gateway can receive traffic. The goal is to make the edge of the cluster behave like something that can be reused: DNS records created automatically, certificates issued automatically, and platform tools available at real hostnames instead of temporary IP addresses.

By the end of this step:

- ExternalDNS manages Cloud DNS records from Gateway API resources

- cert-manager issues Let’s Encrypt certificates using Cloud DNS DNS-01

- Argo CD is reachable at a real HTTPS URL

- a sample application is exposed through Gateway API with DNS and TLS

- platform controllers run on the system node pool

- the sample app runs on the spot node pool

This post does not cover installing Argo CD from scratch. For that bootstrap flow, check Homelab Arena Part 6. Here, Argo CD already exists, is self-managed, and is being used to install the next layer of platform components.

Where this starts

In the earlier GKE work, the cluster moved away from direct service exposure. Instead of using a LoadBalancer service per application, Gateway API became the entry point for inbound traffic. That gave the cluster a cleaner edge, but DNS still had a manual step: create a hostname, copy the Gateway IP, and create an A record by hand.

That was fine for proving the Gateway worked. It was not good enough for a GitOps platform.

The previous structure also separated shared resources from environment-specific resources. DNS zones live in the shared layer, while the GKE cluster, node pools, and environment resources live under nonprod or prod. Terraform is applied through CI with environment-specific roots, so this new layer follows the same pattern.

The GitOps repository also already has an important design decision: nonprod and prod each have their own Argo CD instance. Each instance reads from its own folder in the repo, which lets nonprod act as the staging ground for platform changes before prod is touched.

That matters here. external-dns and cert-manager are core platform services. A bad config can break DNS or certificate issuance. Having a nonprod Argo CD means those changes can be tested against the nonprod cluster first, using the same GitOps workflow, without modifying production.

Why two Argo CD instances

The platform uses two Argo CD instances:

    GKE nonprod Argo CD
    GKE prod Argo CD

This is intentional.

At first, one Argo CD might sound simpler. It is one UI, one set of secrets, and one place to manage applications. But once Argo CD starts managing platform components, that simplicity becomes a risk. If the same controller manages both nonprod and prod, then a mistake in a platform app definition can reach both environments from the same control plane.

Keeping them separate gives a few useful properties:

- nonprod issues do not directly affect prod

- platform upgrades can be tested in nonprod first

- repo credentials and SSO settings can differ by environment

- prod can remain slower and more conservative

- nonprod can move faster while the platform is still evolving

The upgrade flow becomes much safer:

    upgrade external-dns in nonprod
    validate DNS behavior
    promote the same change to prod

or:

    upgrade cert-manager in nonprod
    issue a staging certificate
    issue a production certificate
    then repeat in prod

This is the same reason I want Argo CD itself to be self-managed, but not shared across every environment. The controller is part of the platform, and platform changes need a place to prove themselves.

The platform node pool

The cluster has multiple node pool types prepared:

    system
    regular
    spot

That distinction becomes useful in this step.

Components like external-dns and cert-manager are not normal application workloads. They are platform controllers. If external-dns is unavailable, DNS automation stops. If cert-manager is unavailable, certificate issuance and renewal stop. They do not need many resources, but they should not be scheduled onto the most disposable capacity by accident.

So the platform components use the system pool:

    nodeSelector:
      janus.io/pool: system
    tolerations:
      - key: "janus.io/system"
        operator: "Equal"
        value: "true"
        effect: "NoSchedule"

The sample app, on the other hand, can use the spot pool:

    nodeSelector:
      janus.io/pool: spot
    tolerations:
      - key: "janus.io/spot"
        operator: "Equal"
        value: "true"
        effect: "NoSchedule"

That gives the platform a useful split:

    system pool: cluster services and controllers
    spot pool: cheap, interruptible workloads
    regular pool: workloads that should not be on spot

This is not just about saving money. It makes the scheduling intent visible in the manifests.
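For completeness, a workload that should stay off spot capacity could pin itself to the regular pool in the same way. This is only a sketch: it assumes the regular pool carries a `janus.io/pool: regular` label that follows the pattern of the other two pools, and that it has no taint. Neither detail is shown in this post.

```yaml
# Pod spec fragment for a workload that should not run on spot nodes.
# Assumption: the regular pool is labeled janus.io/pool=regular and is untainted.
# If the pool does carry a taint, add a matching toleration like the ones shown
# for the system and spot pools.
nodeSelector:
  janus.io/pool: regular
```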

Shared DNS zone

The shared DNS zone is created by Terraform:

    {
      "project_id": "google-project-id",
      "region": "us-central1",
      "zone_name": "g-example-com",
      "dns_name": "g.example.com.",
      "description": "Delegated public zone for GKE-managed subdomains"
    }

The zone is shared, but the actors that update it should not be shared.

For nonprod, external-dns gets its own Google service account:

    external-dns-nonprod@google-project-id.iam.gserviceaccount.com

cert-manager gets its own identity too:

    cert-manager-nonprod@google-project-id.iam.gserviceaccount.com

That separation matters. external-dns and cert-manager both need to write DNS records, but they do it for different reasons.

external-dns creates application records:

    argocd.nonprod.g.example.com -> Gateway IP
    hello.g.example.com -> Gateway IP

cert-manager creates temporary ACME challenge records:

    _acme-challenge.<hostname> -> challenge token

They both touch DNS, but they are separate controllers and should have separate identities.

Terraform access for external-dns

The external-dns access module creates a Google service account, grants DNS access, and binds the Kubernetes service account to the Google service account through GKE Workload Identity.

The module looks like this:

    locals {
      gsa_email = var.create_gsa ? google_service_account.external_dns[0].email : var.existing_gsa_email
    }

    resource "google_service_account" "external_dns" {
      count        = var.create_gsa ? 1 : 0
      project      = var.project_id
      account_id   = var.gsa_account_id
      display_name = var.gsa_display_name
      description  = "Google service account used by external-dns via GKE Workload Identity."
    }

    resource "google_dns_managed_zone_iam_member" "external_dns_zone_access" {
      project      = var.dns_project_id
      managed_zone = var.dns_zone_name
      role         = var.dns_role
      member       = "serviceAccount:${local.gsa_email}"
    }

    resource "google_project_iam_member" "external_dns_project_access" {
      project = var.dns_project_id
      role    = var.dns_role
      member  = "serviceAccount:${local.gsa_email}"
    }

    resource "google_service_account_iam_member" "workload_identity_user" {
      service_account_id = "projects/${var.project_id}/serviceAccounts/${local.gsa_email}"
      role               = "roles/iam.workloadIdentityUser"
      member             = "serviceAccount:${var.project_id}.svc.id.goog[${var.k8s_namespace}/${var.k8s_service_account_name}]"
    }

The project-level DNS grant is important. At first, it seemed like a managed-zone-level IAM binding should be enough. In practice, the Google provider used by external-dns lists zones first, then matches records to a zone. Without the project-level permission, external-dns can authenticate but still fail with a 403 while listing zones.

The nonprod live stack passes values like this:

    {
      "project_id": "google-project-id",
      "region": "us-central1",
      "dns_project_id": "google-project-id",
      "dns_zone_name": "g-example-com"
    }

The important distinction is still there even if both values are currently the same project:

    project_id     = project where the GKE cluster and GSA live
    dns_project_id = project where the Cloud DNS zone lives

If prod later lives in a different project but still writes to a shared DNS project, the same module can be reused.

Installing external-dns with Argo CD

external-dns is installed as a platform app through Argo CD.

The Argo CD Application points at the GitOps repo path:

    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: external-dns
      namespace: argocd
    spec:
      project: default
      source:
        repoURL: THE_REPO_URL
        targetRevision: HEAD
        path: gke/nonprod/platform/external-dns
        helm:
          valueFiles:
            - values.yaml
      destination:
        server: https://kubernetes.default.svc
        namespace: kube-system
      syncPolicy:
        automated:
          prune: true
          selfHeal: true

The wrapper chart uses the upstream chart as a dependency:

    apiVersion: v2
    name: external-dns
    version: 0.1.0
    dependencies:
      - name: external-dns
        version: 1.20.0
        repository: https://kubernetes-sigs.github.io/external-dns/

Because this is a wrapper chart, the values are nested under the external-dns key:

    external-dns:
      provider:
        name: google
      nodeSelector:
        janus.io/pool: system
      tolerations:
        - key: "janus.io/system"
          operator: "Equal"
          value: "true"
          effect: "NoSchedule"
      serviceAccount:
        create: true
        name: external-dns
        annotations:
          iam.gke.io/gcp-service-account: external-dns-nonprod@google-project-id.iam.gserviceaccount.com
      sources:
        - gateway-httproute
      domainFilters:
        - g.example.com
      policy: upsert-only
      registry: txt
      txtOwnerId: nonprod-gke
      interval: 1m
      logLevel: info

There are a few important details here.

First, the source is gateway-httproute. This makes external-dns read hostnames from Gateway API HTTPRoute resources instead of looking for classic Ingress resources.

Second, the domain filter is the actual managed zone:

    domainFilters:
      - g.example.com

It is tempting to set this to narrower names like:

    qa.g.example.com
    stg.g.example.com
    nonprod.g.example.com

But those only work as domain filters if they are actual managed zones. In this setup, the managed zone is g.example.com. Later, stricter boundaries can be added by creating delegated child zones with Terraform. For now, the controller needs to match the parent zone.

Third, txtOwnerId should be unique per cluster or environment:

    txtOwnerId: nonprod-gke

external-dns uses TXT records to track ownership. Nonprod and prod should not share the same owner ID.
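As a rough sketch of what this means for promotion, the prod values file would mirror nonprod and change only the identity and the owner ID. The file path, the `external-dns-prod` service account name, and the `prod-gke` owner ID below are assumptions for illustration; they are not taken from the actual repo.

```yaml
# Hypothetical gke/prod/platform/external-dns/values.yaml (path assumed to mirror nonprod).
# Scheduling, interval, and logging settings are omitted; they would match nonprod.
external-dns:
  provider:
    name: google
  serviceAccount:
    create: true
    name: external-dns
    annotations:
      # Assumed prod GSA name, created by the same Terraform access module.
      iam.gke.io/gcp-service-account: external-dns-prod@google-project-id.iam.gserviceaccount.com
  sources:
    - gateway-httproute
  domainFilters:
    - g.example.com
  policy: upsert-only
  registry: txt
  # Must differ from the nonprod value ("nonprod-gke") so each cluster
  # only claims the records it created.
  txtOwnerId: prod-gke
```

With separate owner IDs, both controllers can write to the same shared zone while the TXT registry keeps each one from treating the other's records as its own.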

Terraform access for cert-manager

cert-manager also needs DNS access, but for a different reason. Since the goal is to use Let’s Encrypt with DNS-01 validation, cert-manager needs to create and clean up ACME challenge TXT records in Cloud DNS.

A separate access module keeps the identity separate from external-dns:

    locals {
      gsa_email = var.create_gsa ? google_service_account.cert_manager[0].email : var.existing_gsa_email
    }

    resource "google_service_account" "cert_manager" {
      count        = var.create_gsa ? 1 : 0
      project      = var.project_id
      account_id   = var.gsa_account_id
      display_name = var.gsa_display_name
      description  = "Google service account used by cert-manager via GKE Workload Identity."
    }

    resource "google_project_iam_member" "cert_manager_dns_access" {
      project = var.dns_project_id
      role    = var.dns_role
      member  = "serviceAccount:${local.gsa_email}"
    }

    resource "google_service_account_iam_member" "workload_identity_user" {
      service_account_id = "projects/${var.project_id}/serviceAccounts/${local.gsa_email}"
      role               = "roles/iam.workloadIdentityUser"
      member             = "serviceAccount:${var.project_id}.svc.id.goog[${var.k8s_namespace}/${var.k8s_service_account_name}]"
    }

The nonprod module instance uses:

    module "cert_manager_access" {
      source = "../../../modules/cert-manager-access"

      project_id     = var.project_id
      dns_project_id = var.dns_project_id

      gsa_account_id   = "cert-manager-nonprod"
      gsa_display_name = "cert-manager nonprod"

      k8s_namespace            = "cert-manager"
      k8s_service_account_name = "cert-manager"
    }

That binds this Kubernetes service account:

    cert-manager/cert-manager

to this Google service account:

    cert-manager-nonprod@google-project-id.iam.gserviceaccount.com

Installing cert-manager with Argo CD

cert-manager is also installed as a platform app.

    apiVersion: argoproj.io/v1alpha1
    kind: Application
    metadata:
      name: cert-manager
      namespace: argocd
    spec:
      project: default
      source:
        repoURL: THE_REPO_URL
        targetRevision: HEAD
        path: gke/nonprod/platform/cert-manager
        helm:
          valueFiles:
            - values.yaml
      destination:
        server: https://kubernetes.default.svc
        namespace: cert-manager
      syncPolicy:
        automated:
          prune: true
          selfHeal: true
        syncOptions:
          - CreateNamespace=true

The wrapper chart:

    apiVersion: v2
    name: cert-manager
    version: 0.1.0
    dependencies:
      - name: cert-manager
        version: v1.20.2
        repository: oci://quay.io/jetstack/charts

The values file keeps the controller on the system node pool and annotates the service account for Workload Identity:

    cert-manager:
      crds:
        enabled: true
      serviceAccount:
        annotations:
          iam.gke.io/gcp-service-account: cert-manager-nonprod@google-project-id.iam.gserviceaccount.com
      global:
        leaderElection:
          namespace: cert-manager
      nodeSelector:
        janus.io/pool: system
      tolerations:
        - key: "janus.io/system"
          operator: "Equal"
          value: "true"
          effect: "NoSchedule"

ClusterIssuers

cert-manager needs issuers that describe how certificates should be requested.

For nonprod, there are two ClusterIssuer resources:

    letsencrypt-staging
    letsencrypt-prod

The staging issuer is used first to validate the flow without burning production rate limits or debugging against real certificates. Once DNS-01 works, the certificate can be changed to the production issuer.

    apiVersion: cert-manager.io/v1
    kind: ClusterIssuer
    metadata:
      name: letsencrypt-staging
    spec:
      acme:
        email: platform@example.com
        server: https://acme-staging-v02.api.letsencrypt.org/directory
        privateKeySecretRef:
          name: letsencrypt-staging-private-key
        solvers:
          - selector:
              dnsZones:
                - g.example.com
            dns01:
              cloudDNS:
                project: google-project-id
    ---
    apiVersion: cert-manager.io/v1
    kind: ClusterIssuer
    metadata:
      name: letsencrypt-prod
    spec:
      acme:
        email: platform@example.com
        server: https://acme-v02.api.letsencrypt.org/directory
        privateKeySecretRef:
          name: letsencrypt-prod-private-key
        solvers:
          - selector:
              dnsZones:
                - g.example.com
            dns01:
              cloudDNS:
                project: google-project-id

These are cluster-wide. There is no need to create one issuer per Gateway or per application.

The model is:

    ClusterIssuer = reusable issuing policy
    Certificate   = hostname-specific request
    Gateway       = consumes the TLS secret

Because Gateway API is being used directly, the certificate is explicit. This is a little different from older Ingress setups, where cert-manager annotations on an Ingress could create a Certificate indirectly through ingress-shim.
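To make "explicit" concrete, here is a minimal sketch of a standalone Certificate that exercises the staging issuer before any Gateway depends on it. The resource name, secret name, and the `smoke-test.g.example.com` hostname are hypothetical; only the issuer name and the zone come from the configuration above.

```yaml
# Hypothetical smoke test: requests a staging certificate through DNS-01
# without attaching it to any Gateway yet.
apiVersion: cert-manager.io/v1
kind: Certificate
metadata:
  name: dns01-smoke-test            # hypothetical name
  namespace: cert-manager
spec:
  secretName: dns01-smoke-test-tls  # hypothetical secret name
  issuerRef:
    name: letsencrypt-staging       # staging first, to stay clear of production rate limits
    kind: ClusterIssuer
  dnsNames:
    - smoke-test.g.example.com      # hypothetical hostname inside the managed zone
```

If the Certificate reaches Ready, the Workload Identity binding, the Cloud DNS permissions, and the solver configuration are all working, and the same shape can be reused per hostname with the production issuer.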

With Gateway API, the clearer pattern is:

    Certificate -> Secret -> Gateway listener

Giving Argo CD a real URL

Once external-dns and cert-manager are working, Argo CD can move from a cluster-internal service or temporary access path to a real hostname:

    https://argocd.nonprod.g.example.com

The Argo CD installation itself is not the focus of this post. The important part here is the edge configuration around it.

The Argo CD chart values need to know the external URL and that TLS is terminated before traffic reaches the Argo CD server:

    argo-cd:
      global:
        domain: argocd.nonprod.g.example.com
      configs:
        params:
          server.insecure: true
        cm:
          url: https://argocd.nonprod.g.example.com

Then the platform adds a Certificate, Gateway, and HTTPRoute in the argocd namespace.

    apiVersion: cert-manager.io/v1
    kind: Certificate
    metadata:
      name: argocd-nonprod-g-example-com
      namespace: argocd
    spec:
      secretName: argocd-nonprod-g-example-com-tls
      issuerRef:
        name: letsencrypt-prod
        kind: ClusterIssuer
      dnsNames:
        - argocd.nonprod.g.example.com
    ---
    apiVersion: gateway.networking.k8s.io/v1
    kind: Gateway
    metadata:
      name: argocd-nonprod-gateway
      namespace: argocd
    spec:
      gatewayClassName: gke-l7-global-external-managed
      listeners:
        - name: https
          protocol: HTTPS
          port: 443
          hostname: argocd.nonprod.g.example.com
          tls:
            mode: Terminate
            certificateRefs:
              - name: argocd-nonprod-g-example-com-tls
    ---
    apiVersion: gateway.networking.k8s.io/v1
    kind: HTTPRoute
    metadata:
      name: argocd-nonprod-route
      namespace: argocd
    spec:
      parentRefs:
        - name: argocd-nonprod-gateway
      hostnames:
        - argocd.nonprod.g.example.com
      rules:
        - backendRefs:
            - name: argocd-server
              port: 80

At this point, the public edge for Argo CD is no longer hand wired.

The HTTPRoute gives external-dns a hostname to publish. The Gateway gives GKE a load balancer and HTTPS listener. The Certificate gives cert-manager a request to issue a Let’s Encrypt certificate. The TLS secret produced by cert-manager is referenced by the Gateway.

Sample app: exposing a real URL on the spot pool

The other validation is a simple application scheduled onto the spot pool. This proves that normal workloads can still use the cheaper capacity while platform controllers stay on the system pool.

This is the all.yaml I would use for the public Gateway API version of the sample.

The manifest shows the final state, with the Certificate pointing at letsencrypt-prod. During early testing, set the issuerRef to letsencrypt-staging first, then change it to letsencrypt-prod once the flow works.

    apiVersion: v1
    kind: Namespace
    metadata:
      name: janus-test
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: hello
      namespace: janus-test
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: hello
      template:
        metadata:
          labels:
            app: hello
        spec:
          nodeSelector:
            janus.io/pool: spot
          tolerations:
            - key: "janus.io/spot"
              operator: "Equal"
              value: "true"
              effect: "NoSchedule"
          containers:
            - name: hello
              image: us-docker.pkg.dev/google-samples/containers/gke/hello-app:1.0
              ports:
                - containerPort: 8080
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: hello
      namespace: janus-test
    spec:
      selector:
        app: hello
      ports:
        - name: http
          port: 80
          targetPort: 8080
          protocol: TCP
    ---
    apiVersion: cert-manager.io/v1
    kind: Certificate
    metadata:
      name: hello-g-example-com
      namespace: janus-test
    spec:
      secretName: hello-g-example-com-tls
      issuerRef:
        name: letsencrypt-prod
        kind: ClusterIssuer
      dnsNames:
        - hello.g.example.com
    ---
    apiVersion: gateway.networking.k8s.io/v1
    kind: Gateway
    metadata:
      name: hello-gke-gateway
      namespace: janus-test
    spec:
      gatewayClassName: gke-l7-global-external-managed
      listeners:
        - name: https
          protocol: HTTPS
          port: 443
          hostname: hello.g.example.com
          tls:
            mode: Terminate
            certificateRefs:
              - name: hello-g-example-com-tls
    ---
    apiVersion: gateway.networking.k8s.io/v1
    kind: HTTPRoute
    metadata:
      name: hello-route
      namespace: janus-test
    spec:
      parentRefs:
        - name: hello-gke-gateway
      hostnames:
        - hello.g.example.com
      rules:
        - matches:
            - path:
                type: PathPrefix
                value: /
          backendRefs:
            - name: hello
              port: 80

The important part is the relationship between the resources:

    HTTPRoute hostname   -> external-dns creates A record
    Certificate dnsNames -> cert-manager creates TLS secret
    Gateway listener     -> uses TLS secret and routes to HTTPRoute
    Deployment           -> runs on spot pool

If the TLS secret does not exist yet, the Gateway may temporarily report an invalid listener. That is expected until cert-manager finishes issuing the certificate.

Validation

The first thing to watch is external-dns:

    kubectl -n kube-system logs deploy/external-dns -f

A successful run creates both the ownership TXT record and the A record:

    Add records: a-hello.g.example.com. TXT ["heritage=external-dns,external-dns/owner=nonprod-gke,external-dns/resource=httproute/janus-test/hello-route"] 300
    Add records: hello.g.example.com. A [33.120.22.122] 300

Then check the Gateway:

    kubectl -n janus-test get gateway

Expected:

    NAME                CLASS                            ADDRESS         PROGRAMMED
    hello-gke-gateway   gke-l7-global-external-managed   33.120.22.122   True

If local DNS has not caught up yet, test directly with --resolve:

    curl -vk --resolve hello.g.example.com:443:33.120.22.122 \
      https://hello.g.example.com/

The useful part of the response is not just that it returns 200. It also proves TLS and routing are both working:

    Server certificate:
      subject: CN=hello.g.example.com
      issuer: C=US; O=(STAGING) Let's Encrypt; CN=(STAGING) Riddling Rhubarb R12
    HTTP/2 200
    Hello, world!
    Version: 1.0.0

Once this works with staging, switching to production is just changing the issuerRef:

    issuerRef:
      name: letsencrypt-prod
      kind: ClusterIssuer

What changed

The previous cluster could run workloads and receive traffic through Gateway API.

This version can manage the public edge through GitOps.

The difference is significant:

    Before:
      Gateway gets IP
      manual DNS record
      manual certificate thinking
      curl to test

    After:
      HTTPRoute declares hostname
      external-dns creates DNS record
      Certificate declares desired TLS cert
      cert-manager issues Let's Encrypt cert
      Gateway terminates HTTPS

Argo CD now has a real URL:

    https://argocd.nonprod.g.example.com

A sample app can also be exposed with a real URL and a real certificate, while running on spot capacity.

The platform services themselves run on the system pool, keeping the cluster controllers away from the more disposable workload pool.

Final shape

The platform layer now looks like this:

    kube-system
      external-dns

    cert-manager
      cert-manager controller
      letsencrypt-staging ClusterIssuer
      letsencrypt-prod ClusterIssuer

    argocd
      Argo CD
      Certificate
      Gateway
      HTTPRoute

    janus-test
      sample app on spot node pool
      Certificate
      Gateway
      HTTPRoute

And the responsibility split is clearer:

    Terraform:
      Cloud DNS zone
      Google service accounts
      IAM permissions
      Workload Identity bindings

    Argo CD:
      external-dns
      cert-manager
      ClusterIssuers
      Gateway resources
      Certificate resources
      application manifests

    GKE Gateway:
      load balancer
      HTTPS listener
      routing into Services

    external-dns:
      A records and TXT ownership records

    cert-manager:
      ACME account
      DNS-01 challenge records
      TLS secrets

Next

With DNS and certificates in place, the next platform decision is access.

Argo CD can now be reached through a real public hostname with TLS. That makes SSO and RBAC the next immediate concern. After that, I want to add the private access path for internal platform tools, likely with the Tailscale operator.

The important milestone here is that the cluster no longer depends on manually copying Gateway IPs into DNS or manually thinking about certificates. The edge is now declared in Kubernetes and reconciled by platform controllers.

That is the point where the cluster starts to feel less like a demo and more like a platform.